neuraxle.base¶

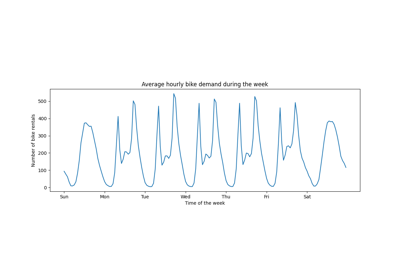

Module-level documentation for neuraxle.base. Here is an inheritance diagram, including dependencies to other base modules of Neuraxle:

Neuraxle’s Base Classes¶

These base classes are the core of Neuraxle. Most other classes inherit from them and compose them in different ways.

This module follows the Interface Segregation Principle (ISP), the SOLID principle of object-oriented programming that recommends splitting large interfaces into smaller, focused ones. The segregation was introduced as the project gained in abstraction and the base classes needed to compose other base classes.

Classes

Any saver must inherit from this one.

Base class for all services registered into the ExecutionContext.

Base class for a transformer step that can also be fitted.

Base class for a pipeline step that can only be transformed.

alias of neuraxle.base.ExecutionContext

A step that can be evaluated with the scoring functions.

Execution context object containing all of the pipeline hierarchy steps.

This enum defines the execution mode of a BaseStep.

This enum defines the execution phase of a BaseStep.

This is like a news feed for pipelines where you post (log) info.

An identity step which forces usage of handler methods.

A step that automatically calls handle methods in the transform, fit, and fit_transform methods.

A step that automatically calls handle methods in the transform, fit, and fit_transform methods.

Identity step that can load the full dump of a pipeline step.

Any step that inherits from this class will test the globally retrievable service assertions of itself and all its children on a will_process call.

Is used to assert the presence of service at the start of the pipeline AND at execution time for a given step.

A pipeline step that only requires the implementation of handler methods:

A pipeline step that has no effect at all but to return the same data without changes.

A step that has a default implementation for all handler methods.

Saver that can save, or load a step with joblib.load, and joblib.dump.

Is used to assert the presence of service at execution time for a given step.

A service containing other services.

A mixin for services containing other services.

Custom saver for meta step mixin.

A class to represent a step that wraps another step.

Any steps/transformers within a pipeline that inherit from this class should implement BaseStep/BaseTransformer and initialize it before any mixin.

Any steps/transformers within a pipeline that inherit from this class should implement BaseStep/BaseTransformer and initialize it before any mixin.

A pipeline step that requires no fitting: fitting just returns self when called to do no action.

A pipeline step that has no effect at all but to return the same data without changes.

A step with context is a step that has an

A pipeline step that only requires the implementation of _transform_data_container.

Enum of the possible states of a trial.

Step saver for a TruncableSteps.

Step that contains multiple steps.

A mixin for services that can be truncated.


-

class neuraxle.base.BaseSaver[source]¶

Bases: abc.ABC

Any saver must inherit from this one. Some savers just save parts of objects, some save it all or what remains. Each BaseStep can potentially have multiple savers to make serialization possible.

-

save_step(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Save a step or a step’s parts using the execution context.

- Parameters

step – step to save

context – execution context

save_savers –

- Returns

-

can_load(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext)[source]¶ Returns true if we can load the given step with the given execution context.

- Parameters

step – step to load

context – execution context to load from

- Returns

-

load_step(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Load step with execution context.

- Parameters

step – step to load

context – execution context to load from

- Returns

loaded base step
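The three-method saver contract above can be illustrated with a minimal, standard-library-only sketch. Everything here is hypothetical and is not the Neuraxle implementation: `SaverSketch`, `JsonAttributesSaver` and `Scaler` are invented names, and a plain `root` directory string stands in for the execution context.

```python
import json
import os
import tempfile
from abc import ABC, abstractmethod


class SaverSketch(ABC):
    """Hypothetical stand-in for the saver interface (not the Neuraxle class)."""

    @abstractmethod
    def save_step(self, step, root: str): ...

    @abstractmethod
    def can_load(self, step, root: str) -> bool: ...

    @abstractmethod
    def load_step(self, step, root: str): ...


class JsonAttributesSaver(SaverSketch):
    """Saves only a part of the step: its JSON-friendly attributes."""

    def _path(self, step, root: str) -> str:
        return os.path.join(root, step.name + ".json")

    def save_step(self, step, root: str):
        os.makedirs(root, exist_ok=True)
        with open(self._path(step, root), "w") as f:
            json.dump(vars(step), f)
        return step

    def can_load(self, step, root: str) -> bool:
        # Loadable only if something was saved under this step's name.
        return os.path.exists(self._path(step, root))

    def load_step(self, step, root: str):
        # Fill the given step instance with the saved attributes.
        with open(self._path(step, root)) as f:
            step.__dict__.update(json.load(f))
        return step


class Scaler:
    def __init__(self, name: str, factor: float):
        self.name = name
        self.factor = factor


root = tempfile.mkdtemp()  # plays the role of the context's root path
saver = JsonAttributesSaver()
blank = Scaler("Scaler", factor=1.0)
could_load_before = saver.can_load(blank, root)
saver.save_step(Scaler("Scaler", factor=3.0), root)
loaded = saver.load_step(blank, root)
```

Note that `load_step` receives an existing step and fills it in, which mirrors the documented signature where a step is passed in and a loaded step is returned.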

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.JoblibStepSaver[source]¶

Bases: neuraxle.base.BaseSaver

Saver that can save or load a step with joblib.load and joblib.dump.

This saver is a good default saver when the object is already stripped of the things that would make it unserializable.

It is the default stripped_saver for the ExecutionContext. The stripped saver is the first to load the step, and the last to save the step. The saver receives a stripped version of the step so that it can be saved by joblib.
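To make the idea of a "stripped" step concrete, here is a small sketch under stated assumptions: the standard-library `pickle` module is swapped in for joblib, and `StepWithLock` and `strip` are hypothetical names. It only shows why an unserializable attribute has to be removed before the last saver runs.

```python
import copy
import pickle
import threading


class StepWithLock:
    def __init__(self):
        self.weights = [0.1, 0.2]
        self.lock = threading.Lock()  # a lock cannot be pickled


def strip(step):
    """Return a shallow copy without the attribute that blocks serialization."""
    stripped = copy.copy(step)
    stripped.lock = None
    return stripped


step = StepWithLock()

# Serializing the raw attributes fails because of the lock.
try:
    pickle.dumps(vars(step))
    raw_step_is_picklable = True
except TypeError:
    raw_step_is_picklable = False

# Serializing the stripped attributes works.
payload = pickle.dumps(vars(strip(step)))
restored_attributes = pickle.loads(payload)
```

In this sketch an earlier, more specialized saver would be responsible for persisting or recreating the lock; the default saver only ever sees the stripped copy.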

-

can_load(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext) → bool[source]¶ Returns true if the given step has been saved with the given execution context.

- Return type

bool

- Parameters

step – step that might have been saved

context – execution context

- Returns

if we can load the step with the given context

-

_get_step_path(context, step)[source]¶ Create step path for the given context.

- Parameters

context – execution context

step – step to save, or load

- Returns

path

-

save_step(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Save a step that has been stripped of the things that would make it unserializable.

- Parameters

step – stripped step to save

context – execution context to save from

- Returns

-

load_step(step: neuraxle.base.BaseTransformer, context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Load stripped step.

- Parameters

step – stripped step to load

context – execution context to load from

- Returns

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.ExecutionMode[source]¶

Bases: enum.Enum

This enum defines the execution mode of a BaseStep. It is available in the ExecutionContext as execution_mode.

-

class neuraxle.base.ExecutionPhase[source]¶

Bases: enum.Enum

This enum defines the execution phase of a BaseStep. It is available in the ExecutionContext as execution_phase.

-

class neuraxle.base.MixinForBaseService[source]¶

Bases: object

Any step or transformer within a pipeline that inherits from this class should implement BaseStep/BaseTransformer and initialize it before any mixin. This class checks that this is the case at initialization.

-

class neuraxle.base._RecursiveArguments(ra=None, args: Union[List[Any], List[neuraxle.hyperparams.space.RecursiveDict]] = None, kwargs: Union[Dict[str, Any], neuraxle.hyperparams.space.RecursiveDict] = None, current_level: int = 0)[source]¶

Bases: object

This class is used by apply() and _HasChildrenMixin to pass the right arguments to steps with children.

There are two types of arguments:

- args: arguments that are not named
- kwargs: arguments that are named

For the values of both args and kwargs, we use either values or recursive values:

- value is not RecursiveDict: the value is replicated and passed to each sub step.
- value is RecursiveDict: the value is sliced accordingly and decomposed into the next levels.

As a shorthand, if another _RecursiveArguments (ra) is passed as an argument, it is used almost as is to merge different ways of using ra: using a past ra, or else some args.

See also

_HasChildrenMixin, get_hyperparams_space(), set_hyperparams_space(), update_hyperparams_space(), get_hyperparams(), set_hyperparams(), update_hyperparams(), get_config(), set_config(), update_config(), invalidate()
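The replicate-or-slice rule described above can be sketched without Neuraxle. This is only an illustration under assumptions: a plain nested `dict` stands in for `RecursiveDict`, and `args_for_child` is a hypothetical helper, not a function of the library.

```python
from typing import Any, Dict


def args_for_child(kwargs: Dict[str, Any], child_name: str) -> Dict[str, Any]:
    """Compute the keyword arguments that one child receives from its parent."""
    child_kwargs = {}
    for key, value in kwargs.items():
        if isinstance(value, dict):
            # Recursive value: keep only this child's slice, one level down.
            child_kwargs[key] = value.get(child_name, {})
        else:
            # Plain value: replicated as is to every child.
            child_kwargs[key] = value
    return child_kwargs


kwargs = {
    "verbose": True,
    "hyperparams": {"learning_rate": 0.1, "SomeStep": {"learning_rate": 0.05}},
}
some_step_kwargs = args_for_child(kwargs, "SomeStep")
other_step_kwargs = args_for_child(kwargs, "OtherStep")
```

Here `verbose` reaches both children unchanged, while `hyperparams` is narrowed to each child's own sub-dictionary (empty for a child that has no entry).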

-

class neuraxle.base._HasRecursiveMethods(name: str = None)[source]¶

Bases: object

An internal class to represent a step that has recursive methods. The apply() function is used to apply a method to a step and its children.

Example usage:

    class _HasHyperparams:
        # ...
        def set_hyperparams(self, hyperparams: Union[HyperparameterSamples, Dict]) -> HyperparameterSamples:
            self.apply(method='_set_hyperparams', hyperparams=HyperparameterSamples(hyperparams))
            return self

        def _set_hyperparams(self, hyperparams: Union[HyperparameterSamples, Dict]) -> HyperparameterSamples:
            self._invalidate()
            hyperparams = HyperparameterSamples(hyperparams)
            self.hyperparams = hyperparams if len(hyperparams) > 0 else self.hyperparams
            return self.hyperparams

    pipeline = Pipeline([
        SomeStep()
    ])
    pipeline.set_hyperparams(HyperparameterSamples({
        'learning_rate': 0.1,
        'SomeStep__learning_rate': 0.05
    }))

-

set_name(name: str) → neuraxle.base.BaseService[source]¶ Set the name of the service.

- Parameters

name (str) – a string.

- Returns

self

Note

A step name is in the keys of steps_as_tuple

-

get_name() → str[source]¶ Get the name of the service.

- Returns

the name, a string.

Note

A step name is the same value as the one in the keys of Pipeline.steps_as_tuple

-

getattr(attr_name: str) → neuraxle.hyperparams.space.RecursiveDict[source]¶ Get an attribute of the service or step, if it exists, returned as a RecursiveDict.

- Return type

RecursiveDict

- Parameters

attr_name (str) – the name of the attribute to get in each step or service.

- Returns

A RecursiveDict with terminal leaves like RecursiveDict({attr_name: getattr(self, attr_name)}).

-

_getattr(attr_name: str) → RecursiveDict[str, str][source]¶ Get an attribute if it exists, as a RecursiveDict({attr_name: getattr(self, attr_name)}).

-

apply(method: Union[str, Callable], ra: neuraxle.base._RecursiveArguments = None, *args, **kwargs) → neuraxle.hyperparams.space.RecursiveDict[source]¶ Apply a method to a step and its children.

Here is an apply usage example that invalidates each step. This example comes from the saving logic:

    # preparing to save steps and its nested children:
    if full_dump:
        # initialize and invalidate steps to make sure that all steps will be saved
        def _initialize_if_needed(step):
            if not step.is_initialized:
                step._setup(context=context)
                if not step.is_initialized:
                    raise NotImplementedError(f"The `setup` method of the following class "
                                              f"failed to set `self.is_initialized` to True: {step.__class__.__name__}.")
            return RecursiveDict()

        def _invalidate(step):
            step._invalidate()
            return RecursiveDict()

        self.apply(method=_initialize_if_needed)
        self.apply(method=_invalidate)

    # save steps:
    ...

Here is another example. When setting the hyperparameter space of a step, we use _set_hyperparams_space() to set the space of the step. The trick is that the HyperparameterSpace argument is a recursive dict, inheriting from RecursiveDict, so the recursive arguments are applied to the step and its children with the apply() method of _HasChildrenMixin. The implementation is the same for setting the hyperparams and config of the step and its children, not only its space. Here is the implementation, using apply:

    def set_hyperparams_space(self, hyperparams_space: HyperparameterSpace) -> 'BaseTransformer':
        self.apply(method='_set_hyperparams_space', hyperparams_space=HyperparameterSpace(hyperparams_space))
        return self

    def _set_hyperparams_space(self, hyperparams_space: Union[Dict, HyperparameterSpace]) -> HyperparameterSpace:
        self._invalidate()
        self.hyperparams_space = HyperparameterSpace(hyperparams_space)
        return self.hyperparams_space

- Return type

RecursiveDict

- Parameters

method – name of the method, or callable, that needs to be called on all steps

ra (_RecursiveArguments) – recursive arguments

args – any additional positional arguments to be passed to the method

kwargs – any additional keyword arguments to be passed to the method

- Returns

method outputs, or None if no method has been applied
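The recursion performed by apply can be pictured with a small self-contained sketch. It is not the library's code: `Node` is a hypothetical class, plain dicts replace `RecursiveDict`, and the slicing of recursive arguments is left out to keep the focus on how results are collected per child.

```python
from typing import Callable, Dict, List, Union


class Node:
    def __init__(self, name: str, children: List["Node"] = None):
        self.name = name
        self.children = children or []
        self.is_invalidated = False

    def _invalidate(self) -> Dict:
        self.is_invalidated = True
        return {"invalidated": True}

    def apply(self, method: Union[str, Callable], **kwargs) -> Dict:
        # A string is resolved to a method of this node; a callable gets the node.
        if isinstance(method, str):
            results = dict(getattr(self, method)(**kwargs))
        else:
            results = dict(method(self, **kwargs))
        # Children results are nested under each child's name.
        for child in self.children:
            results[child.name] = child.apply(method, **kwargs)
        return results


tree = Node("Pipeline", [Node("A"), Node("B", [Node("C")])])
results = tree.apply("_invalidate")
names = tree.apply(lambda node: {"name": node.name})
```

Both calling styles from the documentation are covered: a method name (`"_invalidate"`) and a callable that receives the step.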


-

-

class neuraxle.base._HasConfig(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None)[source]¶

Bases: abc.ABC

An internal class to represent a step that has config params. This is useful to store the config of a step.

A RecursiveDict config attribute is used when you don’t want to use a HyperparameterSamples attribute. The reason is that you sometimes don’t want to tune your config, whereas hyperparameters are used to tune your step in the AutoML from hyperparameter spaces, such as using hyperopt.

A good example of a config parameter would be the number of threads, or an API key loaded from the OS’ environment variables, since they won’t be tuned but are changeable from the outside.

Thus, this class looks a lot like _HasHyperparams and HyperparameterSpace.

See also

BaseStep, BaseTransformer, _HasHyperparams, _HasHyperparamsSpace, HyperparameterSpace, HyperparameterSamples, RecursiveDict

-

__init__(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_config(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict]) → neuraxle.base.BaseTransformer[source]¶ Set step config. See set_hyperparams() for more usage examples and documentation; it works the same way.

-

_set_config(config: neuraxle.hyperparams.space.RecursiveDict) → neuraxle.hyperparams.space.RecursiveDict[source]¶

-

update_config(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict]) → neuraxle.base.BaseTransformer[source]¶ Update the step config variables without removing the already-set config variables. This method is similar to update_hyperparams(). Refer to it for more documentation and usage examples; it works the same way.

-

_update_config(config: neuraxle.hyperparams.space.RecursiveDict) → neuraxle.hyperparams.space.RecursiveDict[source]¶

-

get_config() → neuraxle.hyperparams.space.RecursiveDict[source]¶ Get step config. Refer to get_hyperparams() for more documentation and usage examples; it works the same way.
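The difference between setting and updating a config can be sketched as follows. This is a simplified illustration with a flat dict and a hypothetical `HasConfigSketch` class; it assumes that set replaces the whole config while update merges into it, which is what the wording "without removing the already-set config variables" suggests. The environment variable name is made up.

```python
import os


class HasConfigSketch:
    def __init__(self, config: dict = None):
        self.config = dict(config or {})

    def set_config(self, config: dict):
        # Replace the whole config.
        self.config = dict(config)
        return self

    def update_config(self, config: dict):
        # Merge into the existing config, keeping keys that are not overridden.
        self.config.update(config)
        return self

    def get_config(self) -> dict:
        return dict(self.config)


step = HasConfigSketch({
    "n_threads": 4,
    # Hypothetical variable name, read from the environment instead of being tuned.
    "api_key": os.environ.get("SKETCH_API_KEY", "unset"),
})
step.update_config({"n_threads": 8})
after_update = step.get_config()
step.set_config({"n_threads": 2})
after_set = step.get_config()
```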

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.BaseService(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None, name: str = None)[source]¶

Bases: neuraxle.base._HasConfig, neuraxle.base._HasRecursiveMethods, abc.ABC

Base class for all services registered into the ExecutionContext.

-

__init__(config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None, name: str = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base._HasChildrenMixin[source]¶

Bases: neuraxle.base.MixinForBaseService, typing.Generic

Mixin to add behavior to the steps that have children (sub steps).

-

apply(method: Union[str, Callable], ra: neuraxle.base._RecursiveArguments = None, *args, **kwargs) → neuraxle.hyperparams.space.RecursiveDict[source]¶ Apply method to root, and children steps. Split the root, and children values inside the arguments of type RecursiveDict.

This method overrides the apply() method of _HasRecursiveMethods. Read the documentation of the original method to learn more.

Read more: Steps containing other steps.

- Return type

RecursiveDict

- Parameters

method – str or callable function to apply

ra (_RecursiveArguments) – recursive arguments

- Returns

-

_apply_self(method: Union[str, Callable], ra: neuraxle.base._RecursiveArguments) → neuraxle.hyperparams.space.RecursiveDict[source]¶

-

_apply_childrens(results: neuraxle.hyperparams.space.RecursiveDict, method: Union[str, Callable], ra: neuraxle.base._RecursiveArguments) → neuraxle.hyperparams.space.RecursiveDict[source]¶

-

_validate_children_exists(ra)[source]¶ Validate that the provided children are in self, and if not, raise an error.

-

get_children() → List[BaseServiceT][source]¶ Get the list of all the children for that step or service.

- Returns

every child step

-

-

class neuraxle.base.MetaServiceMixin(wrapped: BaseServiceT)[source]¶

Bases: neuraxle.base._HasChildrenMixin

A mixin for services containing other services.

-

__init__(wrapped: BaseServiceT)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_step(step: BaseServiceT) → BaseServiceT[source]¶ Set wrapped step to the given step.

- Parameters

step – new wrapped step

- Returns

self

-

get_children() → List[BaseServiceT][source]¶ Get the list of all the children for that step.

_HasChildrenMixin calls this method to apply methods to all of the children for that step.

- Returns

list of child steps

-

-

class neuraxle.base.MetaService(wrapped: BaseServiceT = None, config: neuraxle.hyperparams.space.RecursiveDict = None, name: str = None)[source]¶

Bases: neuraxle.base.MetaServiceMixin, neuraxle.base.BaseService

A service containing other services.

-

__init__(wrapped: BaseServiceT = None, config: neuraxle.hyperparams.space.RecursiveDict = None, name: str = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.TruncableServiceMixin(services: Dict[Union[str, Type[BaseServiceT]], BaseServiceT])[source]¶

Bases: neuraxle.base._HasChildrenMixin

-

__init__(services: Dict[Union[str, Type[BaseServiceT]], BaseServiceT])[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

register_service(service_type: Union[str, Type[BaseServiceT]], service_instance: BaseServiceT) → neuraxle.base.ExecutionContext[source]¶ Register a service instance under the given base class type. Make sure the service is an instance of the class BaseService.

- Parameters

service_type – base type

service_instance – instance

- Returns

self

-

get_services() → Dict[Union[str, Type[BaseServiceT]], BaseServiceT][source]¶ Get the registered instances in the services.

- Returns

the registered services

-

get_service(service_type: Union[str, Type[BaseServiceT]]) → object[source]¶ Get the registered instance for the given abstract class BaseService type. It is common to use service types as keys in the services dictionary.

- Return type

object

- Parameters

service_type – service type

- Returns

the registered service instance

-

has_service(service_type: Union[str, Type[BaseServiceT]]) → bool[source]¶ Return a bool indicating if the service has been registered.

- Return type

bool

- Parameters

service_type – base type

- Returns

whether the service is registered or not
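The register, has and get methods above behave like a small service locator keyed by type. The sketch below is a hypothetical, dependency-free illustration of that pattern; `ServiceRegistrySketch`, `Cache` and `InMemoryCache` are invented names and are not part of Neuraxle.

```python
from abc import ABC, abstractmethod


class ServiceRegistrySketch:
    def __init__(self, services=None):
        self._services = dict(services or {})

    def register_service(self, service_type, service_instance):
        self._services[service_type] = service_instance
        return self  # returning self allows chaining

    def has_service(self, service_type) -> bool:
        return service_type in self._services

    def get_service(self, service_type):
        return self._services[service_type]

    def get_services(self):
        return dict(self._services)


class Cache(ABC):
    """Abstract service type, used as the lookup key."""

    @abstractmethod
    def get(self, key): ...


class InMemoryCache(Cache):
    def __init__(self):
        self._data = {"answer": 42}

    def get(self, key):
        return self._data.get(key)


registry = ServiceRegistrySketch().register_service(Cache, InMemoryCache())
cache = registry.get_service(Cache)
```

Registering the concrete instance under its abstract type lets consumers ask for `Cache` without knowing which implementation was wired in.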

-

get_children() → List[BaseServiceT][source]¶ Get the list of all the children for that step.

_HasChildrenMixin calls this method to apply methods to all of the children for that step.

- Returns

list of child steps

-

-

class neuraxle.base.TruncableService(services: Dict[Type[BaseServiceT], BaseServiceT] = None)[source]¶

Bases: neuraxle.base.TruncableServiceMixin, neuraxle.base.BaseService

-

__init__(services: Dict[Type[BaseServiceT], BaseServiceT] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.Flow(context: Optional[neuraxle.base.ExecutionContext] = None)[source]¶

Bases: neuraxle.base.BaseService

This is like a news feed for pipelines where you post (log) info. Flow is a step that can be used to store the status, metrics, logs, and other information of the execution of the current run.

Concrete implementations of this object may interact with repositories.

-

__init__(context: Optional[neuraxle.base.ExecutionContext] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

link_context(context: neuraxle.base.ExecutionContext) → neuraxle.base.Flow[source]¶ Link the context to the flow with a weak ref.

-

logger¶

-

log_success(best_val_score: float = None, n_epochs_to_val_score: int = None, metric_name: str = None)[source]¶

-

log_best_hps(main_metric_name, best_hps: neuraxle.hyperparams.space.HyperparameterSamples, avg_validation_score: float, avg_n_epoch_to_best_validation_score: int)[source]¶

-

log_error(exception: Exception)[source]¶ Log an exception or error. The stack trace is logged as well.
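As a rough picture of the "news feed" idea, the sketch below links a context by weak reference and logs an exception with its stack trace through the standard `logging` module. All names (`FlowSketch`, `ContextStub`, `ListHandler`, the logger name) are hypothetical, and the real Flow may log differently.

```python
import logging
import weakref


class ContextStub:
    """Hypothetical stand-in for an execution context."""

    def __init__(self, name: str):
        self.name = name


class ListHandler(logging.Handler):
    """Collects log records in memory so that they can be inspected."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record):
        self.records.append(record)


class FlowSketch:
    def __init__(self, context=None):
        self._context_ref = None
        self.logger = logging.getLogger("flow_sketch")
        if context is not None:
            self.link_context(context)

    def link_context(self, context):
        # A weak reference avoids a reference cycle between context and flow.
        self._context_ref = weakref.ref(context)
        return self

    @property
    def context(self):
        return self._context_ref() if self._context_ref is not None else None

    def log_error(self, exception: Exception):
        # exc_info attaches the exception and its traceback to the record.
        self.logger.error("Step failed: %s", exception, exc_info=exception)


handler = ListHandler()
context = ContextStub("root")
flow = FlowSketch(context)
flow.logger.addHandler(handler)
flow.logger.propagate = False

try:
    raise ValueError("bad input")
except ValueError as e:
    flow.log_error(e)
```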

-

_abc_impl= <_abc_data object>¶

-

-

class neuraxle.base.ExecutionContext(root: str = None, flow: neuraxle.base.Flow = None, execution_phase: neuraxle.base.ExecutionPhase = <ExecutionPhase.UNSPECIFIED: None>, execution_mode: neuraxle.base.ExecutionMode = <ExecutionMode.FIT_OR_FIT_TRANSFORM_OR_TRANSFORM: 'fit_or_fit_transform_or_transform'>, stripped_saver: neuraxle.base.BaseSaver = None, parents: List[BaseStep] = None, services: Dict[Union[str, Type[BaseServiceT]], BaseServiceT] = None)[source]¶

Bases: neuraxle.base.TruncableService

Execution context object containing all of the pipeline hierarchy steps. First item in execution context parents is root, second is nested, and so on. This is like a stack. It tracks the current step, the current phase, the current execution mode, and the current saver, as well as other information such as the current path and other caching information.

For instance, it is used in the handle_fit and handle_transform methods of the BaseStep as follows: handle_fit(), handle_transform().

This class can save and load steps using BaseSaver objects and the given root saving path.

Like a service locator, it is used to access some registered services to be made available to the pipeline at every step when they process data. If pipeline steps are composed like a tree, the execution context is used to pass information between steps. Thus, some domain services can be registered in the execution context, and then used by the pipeline steps.

One could design a lazy data loader that loads data only when needed, and have only the data IDs pass into the pipeline steps prior to hitting a step that needs the data and loads it when needed.

This way, a cache and several other contextual services can be used to store the data IDs and the data.
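The lazy-loading design mentioned above can be sketched in a few lines. This is an assumption-laden illustration: `LazyLoaderSketch`, `filter_ids` and `to_upper` are hypothetical, and a plain dict plays the role of the services registered in the context.

```python
class LazyLoaderSketch:
    """Loads a record only the first time it is requested, then caches it."""

    def __init__(self, storage: dict):
        self._storage = storage
        self._cache = {}
        self.n_loads = 0

    def load(self, data_id):
        if data_id not in self._cache:
            self.n_loads += 1
            self._cache[data_id] = self._storage[data_id]
        return self._cache[data_id]


storage = {1: "alpha", 2: "beta", 3: "gamma"}
services = {LazyLoaderSketch: LazyLoaderSketch(storage)}


def filter_ids(ids):
    # Early step: works on IDs only, nothing is loaded yet.
    return [i for i in ids if i != 2]


def to_upper(ids, services):
    # Later step: needs the data, so it asks the registered loader for it.
    loader = services[LazyLoaderSketch]
    return [loader.load(i).upper() for i in ids]


outputs = to_upper(filter_ids([1, 2, 3]), services)
n_loads = services[LazyLoaderSketch].n_loads
```

Only the two records that survive the filter are ever loaded, which is the benefit of passing IDs through the early steps.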

The AutoML class is an example of a step that uses this execution context extensively.

-

__init__(root: str = None, flow: neuraxle.base.Flow = None, execution_phase: neuraxle.base.ExecutionPhase = <ExecutionPhase.UNSPECIFIED: None>, execution_mode: neuraxle.base.ExecutionMode = <ExecutionMode.FIT_OR_FIT_TRANSFORM_OR_TRANSFORM: 'fit_or_fit_transform_or_transform'>, stripped_saver: neuraxle.base.BaseSaver = None, parents: List[BaseStep] = None, services: Dict[Union[str, Type[BaseServiceT]], BaseServiceT] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

logger¶

-

flow¶ Flow is a service that is used to log information about the execution of the pipeline.

-

set_execution_phase(phase: neuraxle.base.ExecutionPhase) → neuraxle.base.ExecutionContext[source]¶ Set the instance’s execution phase to given phase from the enum ExecutionPhase.

- Parameters

phase (ExecutionPhase) – execution phase

- Returns

self

-

set_service_locator(services: Dict[Union[str, Type[BaseServiceT]], BaseServiceT]) → neuraxle.base.ExecutionContext[source]¶ Register abstract class type instances that inherit and implement the class BaseService.

- Parameters

services – A dictionary of concrete services to register.

- Returns

self

-

get_execution_mode() → neuraxle.base.ExecutionMode[source]¶ Get the instance’s execution mode from the enum ExecutionMode.

-

save(full_dump=True)[source]¶ Save all unsaved steps in the parents of the execution context using save(). This method is called from a step checkpointer inside a Checkpoint.

- Parameters

full_dump (bool) – save full pipeline dump to be able to load everything without source code (True by default, per the signature).

- Returns

-

should_save_last_step() → bool[source]¶ Returns True if the last step should be saved.

- Returns

if the last step should be saved

-

pop_item() → neuraxle.base.BaseTransformer[source]¶ Change the execution context to be the same as the latest parent context.

- Returns

-

pop() → bool[source]¶ Pop the context. Returns True if it successfully popped an item from the parents list.

- Returns

if an item has been popped

-

push(step: neuraxle.base.BaseTransformer) → neuraxle.base.ExecutionContext[source]¶ Pushes a step in the parents of the execution context.

- Parameters

step – step to add to the execution context

- Returns

self

-

validation() → neuraxle.base.ExecutionContext[source]¶ Set the context’s execution phase to validation.

-

thread_safe() → neuraxle.base.ExecutionContext[source]¶ Prepare the context and its services to be thread safe

- Returns

a tuple of the recursive dict to apply within thread, and the thread safe context

-

process_safe() → neuraxle.base.ExecutionContext[source]¶ Prepare the context and its services to be process safe and reduce the current context for parallelization.

It also performs pickling checks on the services, so that only picklable (parallelizable) services are kept and the multithreading queues are not deadlocked.

- Returns

a tuple of the recursive dict to apply within thread, and the thread safe context

-

get_path(is_absolute: bool = True)[source]¶ Creates the directory path for the current execution context.

The returned context path is the concatenation of:

- the root path,
- the AutoML trial subpath info,
- the parent steps subpath info.

- Parameters

is_absolute (bool) – bool to say if we want to add root to the path or not

- Returns

current context path

-

get_identifier(include_step_names: bool = True) → str[source]¶ Get an identifier depending on the ScopedLocation of the current context.

Useful for logging. Example: "neuraxle.default_project.default_client.0" + ".".join(self.get_names())

-

get_names() → List[str][source]¶ Returns a list of the parent names.

- Returns

list of parents step names
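The stack-like behavior of push, pop, get_names and get_path can be sketched as below. This is a simplified, hypothetical `ContextSketch`: it stores step names instead of steps, it mutates itself and returns self on push (matching the documented return value, though the real class may differ), and it leaves out the AutoML trial subpath.

```python
import os
from typing import List


class ContextSketch:
    def __init__(self, root: str, parents: List[str] = None):
        self.root = root
        self.parents = list(parents or [])

    def push(self, step_name: str) -> "ContextSketch":
        # The most recently pushed step is the most deeply nested one.
        self.parents.append(step_name)
        return self

    def pop(self) -> bool:
        if not self.parents:
            return False
        self.parents.pop()
        return True

    def get_names(self) -> List[str]:
        return list(self.parents)

    def get_path(self, is_absolute: bool = True) -> str:
        parts = ([self.root] if is_absolute else []) + self.parents
        return os.path.join(*parts) if parts else ""


ctx = ContextSketch("cache").push("Pipeline").push("Scaler")
names = ctx.get_names()
absolute_path = ctx.get_path()
relative_path = ctx.get_path(is_absolute=False)
popped = [ctx.pop(), ctx.pop(), ctx.pop()]
```

The third `pop` returns False because the parents list is already empty at that point.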

-

load(path: str) → neuraxle.base.BaseTransformer[source]¶ Load full dump at the given path.

- Parameters

path (str) – pipeline step path

- Returns

loaded step

-

to_identity() → neuraxle.base.ExecutionContext[source]¶ Create a fake execution context containing only identity steps. Create the parents by using the path of the current execution context.

- Returns

fake identity execution context


-

_abc_impl= <_abc_data object>¶

-

-

neuraxle.base.CX[source]¶ alias of

neuraxle.base.ExecutionContext

-

class neuraxle.base._HasSetupTeardownLifecycle[source]¶

Bases: neuraxle.base.MixinForBaseService

Step that has setup and teardown lifecycle methods.

Note

All heavy initialization logic should be done inside the setup method (e.g.: things inside GPU), and NOT in the constructor of your steps.

-

copy(context: neuraxle.base.ExecutionContext = None, deep=True) → neuraxle.base._HasSavers[source]¶ Copy the step.

- Parameters

deep (bool) – if True, copy the savers as well

- Returns

a copy of the step

-

setup(context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Initialize the step before it runs. Heavy things (e.g.: things inside GPU) should be created only from here, and NOT in the constructor.

The _setup method is executed only if self.is_initialized is False. A setup function should set self.is_initialized to True when called.

Warning

This setup method sets up the whole hierarchy of nested steps with children. If you want to setup progressively, use only self._setup() instead. The _setup method is called once for each step when handle_fit, handle_fit_transform or handle_transform is called.

- Parameters

context – execution context

- Returns

self

-

_setup(context: Optional[neuraxle.base.ExecutionContext] = None) → Optional[neuraxle.hyperparams.space.RecursiveDict][source]¶ Internal method to set up the step. May be used by Pipeline to set up the pipeline progressively instead of all at once.
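A minimal sketch of the setup lifecycle follows, assuming a hypothetical `SetupSketch` class with an `is_initialized` flag. It shows the two points made above: nothing heavy is created in the constructor, and `setup` walks the whole hierarchy while `_setup` touches a single step and is skipped once the step is initialized.

```python
class SetupSketch:
    def __init__(self, name, children=None):
        self.name = name
        self.children = list(children or [])
        self.is_initialized = False
        self.resource = None  # nothing heavy is created in the constructor
        self.n_setups = 0

    def _setup(self, context=None):
        """Set up this step only; a no-op when already initialized."""
        if self.is_initialized:
            return
        self.resource = "heavy resource of " + self.name
        self.n_setups += 1
        self.is_initialized = True

    def setup(self, context=None):
        """Set up this step and, recursively, all of its children."""
        self._setup(context)
        for child in self.children:
            child.setup(context)
        return self


leaf = SetupSketch("Leaf")
root_step = SetupSketch("Root", [leaf])
resource_before = leaf.resource
root_step.setup()
root_step.setup()  # the second call changes nothing
```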

-

-

class neuraxle.base._TransformerStep[source]¶

Bases: neuraxle.base.MixinForBaseService

An internal class to represent a step that can be transformed, or inverse transformed. See BaseTransformer for the complete transformer step that can be used inside a neuraxle.pipeline.Pipeline. See BaseStep for a step that can also be fitted inside a neuraxle.pipeline.Pipeline.

Every step must implement transform(). If a step is not transformable, you can inherit from NonTransformableMixin.

Every transformer step has handle methods that can be overridden to add side effects or change the execution flow based on the execution context and the data container.

-

_will_process(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], neuraxle.base.ExecutionContext][source]¶ Apply side effects before any step method.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(data container, execution context)

-

handle_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Override this to add side effects or change the execution flow before (or after) calling transform().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

transformed data container

-

_will_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], neuraxle.base.ExecutionContext][source]¶ Apply side effects before transform.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(data container, execution context)

-

_will_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], neuraxle.base.ExecutionContext][source]¶ This method is deprecated and will redirect to _will_transform. Use _will_transform instead.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(data container, execution context)

-

_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Transform data container.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container

-

transform(data_inputs: DIT) → DIT[source]¶ Transform given data inputs.

- Parameters

data_inputs – data inputs

- Returns

transformed data inputs

-

_did_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Apply side effects after transform.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container

-

_did_process(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Apply side effects after any step method.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container
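The handler methods documented above suggest a fixed call order around transform. The sketch below records that order; it is an assumption inferred from the method names and their descriptions, not the library's code. `HandlerLifecycleSketch` and `RoundingStep` are hypothetical, and a plain list stands in for the data container.

```python
class HandlerLifecycleSketch:
    def __init__(self):
        self.calls = []

    def handle_transform(self, data, context):
        # Assumed order: process hooks wrap transform hooks, which wrap transform.
        data, context = self._will_process(data, context)
        data, context = self._will_transform(data, context)
        data = self._transform_data_container(data, context)
        data = self._did_transform(data, context)
        data = self._did_process(data, context)
        return data

    def _will_process(self, data, context):
        self.calls.append("_will_process")
        return data, context

    def _will_transform(self, data, context):
        self.calls.append("_will_transform")
        return data, context

    def _transform_data_container(self, data, context):
        self.calls.append("_transform_data_container")
        return self.transform(data)

    def _did_transform(self, data, context):
        self.calls.append("_did_transform")
        return data

    def _did_process(self, data, context):
        self.calls.append("_did_process")
        return data

    def transform(self, data_inputs):
        return [x * 2 for x in data_inputs]


class RoundingStep(HandlerLifecycleSketch):
    def _did_transform(self, data, context):
        # Side effect added after transform, without touching transform itself.
        data = super()._did_transform(data, context)
        return [round(x, 1) for x in data]


step = RoundingStep()
outputs = step.handle_transform([0.26, 1.0], context=None)
```

Overriding a single hook, as `RoundingStep` does, changes the behavior around `transform` while leaving the rest of the flow intact.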

-

handle_fit(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseTransformer[source]¶ Override this to add side effects or change the execution flow before (or after) calling fit().

- Parameters

data_container – the data container to fit on

context (ExecutionContext) – execution context

- Returns

the fitted step

-

handle_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseTransformer, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶ Override this to add side effects or change the execution flow before (or after) calling fit_transform().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

tuple(fitted pipeline, data_container)

-

fit(data_inputs: DIT, expected_outputs: EOT) → neuraxle.base._TransformerStep[source]¶ Fit given data inputs. By default, a step only transforms in the fit transform method. To add fitting to your step, see _FittableStep for more info.

- Parameters

data_inputs – data inputs

expected_outputs – expected outputs to fit on

- Returns

self

-

fit_transform(data_inputs: DIT, expected_outputs: EOT = None) → Tuple[neuraxle.base._TransformerStep, DIT][source]¶ Fit transform given data inputs. By default, a step only transforms in the fit transform method. To add fitting to your step, see _FittableStep for more info.

- Parameters

data_inputs – data inputs

expected_outputs – expected outputs to fit on

- Returns

(self, transformed data inputs)

-

handle_predict(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Handle_transform in test mode.

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

transformed data container

-

predict(data_input: DIT) → DIT[source]¶ Predict the expected output in test mode using transform(), by setting self to test mode first and then reverting the mode.

- Parameters

data_input – data input to predict

- Returns

prediction
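As a rough illustration of "set test mode, transform, then revert", here is a self-contained sketch that does not use Neuraxle. `ToyDropout` and its mode-dependent `transform` are hypothetical; the only point is the temporary mode switch around the call.

```python
from typing import List


class ToyDropout:
    """Hypothetical step whose transform depends on the train/test mode."""

    def __init__(self) -> None:
        self.is_train = True

    def set_train(self, is_train: bool = True) -> "ToyDropout":
        self.is_train = is_train
        return self

    def transform(self, data_inputs: List[float]) -> List[float]:
        # Train mode zeroes every other value; test mode passes values through.
        if self.is_train:
            return [x if i % 2 == 0 else 0.0 for i, x in enumerate(data_inputs)]
        return list(data_inputs)

    def predict(self, data_input: List[float]) -> List[float]:
        was_train = self.is_train
        self.set_train(False)  # switch to test mode for the duration of the call
        try:
            return self.transform(data_input)
        finally:
            self.set_train(was_train)  # revert to the previous mode


step = ToyDropout()
prediction = step.predict([1.0, 2.0, 3.0])
train_output = step.transform([1.0, 2.0, 3.0])
```

The `try`/`finally` is a design choice of this sketch so that the mode is restored even if the transform raises.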

-

handle_inverse_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT][source]¶ Override this to add side effects or change the execution flow before (or after) calling inverse_transform().

- Parameters

data_container – the data container to inverse transform

context (ExecutionContext) – execution context

- Returns

data_container

See also

-

_inverse_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT][source]¶

-

inverse_transform(processed_outputs: DIT) → DIT[source]¶ Inverse Transform the given transformed data inputs.

    p = Pipeline([MultiplyByN(2)])

    _in = np.array([1, 2])
    _out = p.transform(_in)
    _regenerated_in = p.inverse_transform(_out)

    assert np.array_equal(_regenerated_in, _in)
    assert np.array_equal(_out, _in * 2)

- Parameters

processed_outputs – processed data inputs

- Returns

inverse transformed processed outputs

-

class

neuraxle.base._FittableStep[source]¶ Bases:

neuraxle.base.MixinForBaseService

An internal class to represent a step that can be fitted. See BaseStep for a complete step that can be transformed and fitted inside a neuraxle.pipeline.Pipeline.

See also

-

handle_fit(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseStep[source]¶ Override this to add side effects or change the execution flow before (or after) calling fit().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

the fitted step

-

_will_fit(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], neuraxle.base.ExecutionContext][source]¶ Before fit is called, apply side effects on the step, the data container, or the execution context.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(data container, execution context)

-

_fit_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base._FittableStep[source]¶ Fit data container.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

fitted self

-

_did_fit(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT][source]¶ Apply side effects after fit is called.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container

-

fit(data_inputs: DIT, expected_outputs: EOT) → neuraxle.base._FittableStep[source]¶ Fit data inputs on the given expected outputs.

- Parameters

data_inputs – data inputs

expected_outputs – expected outputs to fit on.

- Returns

self

-

handle_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶ Override this to add side effects or change the execution flow before (or after) calling fit_transform().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

tuple(fitted pipeline, data_container)

-

_will_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], neuraxle.base.ExecutionContext][source]¶ Apply side effects before fit_transform is called.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(data container, execution context)

-

_fit_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶ Fit transform data container.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

(fitted self, data container)

-

fit_transform(data_inputs, expected_outputs=None) → Tuple[neuraxle.base.BaseStep, Any][source]¶ Fit, and transform step with the given data inputs, and expected outputs.

- Parameters

data_inputs – data inputs

expected_outputs – expected outputs to fit on

- Returns

(fitted self, transformed data inputs)
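The return conventions above (fit returns the fitted step, fit_transform returns a pair) can be illustrated with a small stand-alone class. This is a sketch under stated assumptions: `ToyMeanCenterer` is hypothetical and does not inherit from any Neuraxle class.

```python
from typing import List, Optional, Tuple


class ToyMeanCenterer:
    """Hypothetical fittable step: learns a mean in fit(), subtracts it in transform()."""

    def __init__(self) -> None:
        self.mean: Optional[float] = None

    def fit(self, data_inputs: List[float], expected_outputs=None) -> "ToyMeanCenterer":
        # State changes only happen here, while fitting.
        self.mean = sum(data_inputs) / len(data_inputs)
        return self  # fit returns the fitted step

    def transform(self, data_inputs: List[float]) -> List[float]:
        return [x - self.mean for x in data_inputs]

    def fit_transform(
        self, data_inputs: List[float], expected_outputs=None
    ) -> Tuple["ToyMeanCenterer", List[float]]:
        self.fit(data_inputs, expected_outputs)
        return self, self.transform(data_inputs)  # (fitted self, transformed data inputs)


step, outputs = ToyMeanCenterer().fit_transform([1.0, 2.0, 3.0])
```

Returning the step from both methods allows the caller to rebind it, as in `p, outputs = p.fit_transform(...)`.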

-

_did_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Apply side effects after fit transform.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container

-

-

class

neuraxle.base._CustomHandlerMethods[source]¶ Bases:

neuraxle.base.MixinForBaseService

A class to represent a step that needs to add special behavior on top of the normal handler methods. It allows the step to apply side effects before calling the real handler method.

- Apply additional behavior (mini-batching, parallel processing, etc.) before calling the internal handler methods.

-

handle_fit(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseStep[source]¶ Handle fit with a custom handler method for fitting the data container. The custom method to override is fit_data_container. The custom method fit_data_container replaces _fit_data_container.

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

the fitted step

See also

DataContainer, ExecutionContext

-

handle_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶ Handle fit_transform with a custom handler method for fitting and transforming the data container. The custom method to override is fit_transform_data_container. The custom method fit_transform_data_container replaces _fit_transform_data_container().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

tuple(fitted pipeline, data_container)

See also

DataContainer, ExecutionContext

-

handle_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Handle transform with a custom handler method for transforming the data container. The custom method to override is transform_data_container. The custom method transform_data_container replaces _transform_data_container().

- Parameters

data_container – the data container to transform

context (ExecutionContext) – execution context

- Returns

transformed data container

See also

DataContainer, ExecutionContext

-

fit_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext)[source]¶ Custom fit data container method.

- Parameters

data_container – data container to fit on

context (ExecutionContext) – execution context

- Returns

fitted self

-

fit_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext)[source]¶ Custom fit transform data container method.

- Parameters

data_container – data container to fit on

context (ExecutionContext) – execution context

- Returns

fitted self, transformed data container

-

transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext)[source]¶ Custom transform data container method.

- Parameters

data_container – data container to transform

context (ExecutionContext) – execution context

- Returns

transformed data container
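To make the delegation described for this class concrete, the sketch below routes a public handler to a custom method that applies mini-batching before the per-batch work. It is a stand-alone illustration and not Neuraxle code: `ToyMiniBatcher`, `_transform_batch`, and the plain list used as the data container are all hypothetical.

```python
from typing import Any, Dict, List


class ToyMiniBatcher:
    """Hypothetical step whose handler routes to a custom method that batches the data."""

    def __init__(self, batch_size: int) -> None:
        self.batch_size = batch_size
        self.seen_batches: List[List[int]] = []

    def handle_transform(self, data: List[int], context: Dict[str, Any]) -> List[int]:
        # The handler delegates to the custom method instead of a default internal one.
        return self.transform_data_container(data, context)

    def transform_data_container(self, data: List[int], context: Dict[str, Any]) -> List[int]:
        outputs: List[int] = []
        for start in range(0, len(data), self.batch_size):
            batch = data[start:start + self.batch_size]
            self.seen_batches.append(batch)  # side effect applied before the real work
            outputs.extend(self._transform_batch(batch))
        return outputs

    def _transform_batch(self, batch: List[int]) -> List[int]:
        return [x + 1 for x in batch]


step = ToyMiniBatcher(batch_size=2)
result = step.handle_transform([1, 2, 3, 4, 5], context={})
```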

-

class

neuraxle.base._HasHyperparamsSpace(hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None)[source]¶ Bases:

neuraxle.base.MixinForBaseService

An internal class to represent a step that has hyperparameter spaces of type HyperparameterSpace. See BaseStep for a complete step that can be transformed and fitted inside a neuraxle.pipeline.Pipeline.

-

__init__(hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_hyperparams_space(hyperparams_space: Union[Dict[KT, VT], neuraxle.hyperparams.space.HyperparameterSpace]) → neuraxle.base.BaseTransformer[source]¶ Set step hyperparameters space.

Example :

    step.set_hyperparams_space(HyperparameterSpace({
        'hp': RandInt(0, 10)
    }))

- Parameters

hyperparams_space – hyperparameters space

- Returns

self

Note

This is a recursive method that will call BaseStep._set_hyperparams_space() in the end.

See also

HyperparameterSamples, HyperparameterSpace, HyperparameterDistribution, _HasChildrenMixin, BaseStep.apply(), _HasChildrenMixin._apply(), _HasChildrenMixin._get_params()

-

_set_hyperparams_space(hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace) → neuraxle.hyperparams.space.HyperparameterSpace[source]¶

-

update_hyperparams_space(hyperparams_space: Union[Dict[KT, VT], neuraxle.hyperparams.space.HyperparameterSpace]) → neuraxle.base.BaseTransformer[source]¶ Update the step hyperparameter spaces without removing the already-set hyperparameters. This can be useful to add more hyperparameter spaces to the existing ones without flushing the ones that were already set.

Example :

    step.set_hyperparams_space(HyperparameterSpace({
        'learning_rate': LogNormal(0.5, 0.5),
        'weight_decay': LogNormal(0.001, 0.0005)
    }))

    step.update_hyperparams_space(HyperparameterSpace({
        'learning_rate': LogNormal(0.5, 0.1)
    }))

    assert step.get_hyperparams_space()['learning_rate'] == LogNormal(0.5, 0.1)
    assert step.get_hyperparams_space()['weight_decay'] == LogNormal(0.001, 0.0005)

- Parameters

hyperparams_space – hyperparameters space

- Returns

self

Note

This is a recursive method that will call BaseStep._update_hyperparams_space() in the end.

See also

update_hyperparams(), HyperparameterSpace

-

_update_hyperparams_space(hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace) → neuraxle.hyperparams.space.HyperparameterSpace[source]¶

-

-

class

neuraxle.base._HasHyperparams(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None)[source]¶ Bases:

neuraxle.base.MixinForBaseService

An internal class to represent a step that has hyperparameters of type HyperparameterSamples. See BaseStep for a complete step that can be transformed and fitted inside a neuraxle.pipeline.Pipeline.

Every step has hyperparameters and hyperparameter spaces that can be set before the learning process begins. Hyperparameters can be passed in the constructor, and can also be set by the pipeline that contains all of the steps:

    pipeline = Pipeline([
        SomeStep()
    ])

    pipeline.set_hyperparams(HyperparameterSamples({
        'learning_rate': 0.1,
        'SomeStep__learning_rate': 0.05
    }))
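The 'SomeStep__learning_rate' key in the example above addresses a hyperparameter of a child step by name. As a rough illustration of that naming scheme, and assuming a double underscore separates the step name from the hyperparameter name, flat keys can be grouped per step as follows. `split_flat_hyperparams` is a hypothetical helper written for this sketch, not a Neuraxle function.

```python
from typing import Any, Dict, Tuple


def split_flat_hyperparams(
    flat: Dict[str, Any], separator: str = "__"
) -> Tuple[Dict[str, Any], Dict[str, Dict[str, Any]]]:
    """Split flat keys into the parent's own values and per-child values.

    Assumption: a key of the form '<child name><separator><param>' targets a child step.
    """
    own: Dict[str, Any] = {}
    children: Dict[str, Dict[str, Any]] = {}
    for key, value in flat.items():
        if separator in key:
            child_name, child_key = key.split(separator, 1)
            children.setdefault(child_name, {})[child_key] = value
        else:
            own[key] = value
    return own, children


own, children = split_flat_hyperparams({
    'learning_rate': 0.1,
    'SomeStep__learning_rate': 0.05,
})
```

Splitting only on the first separator leaves deeper paths (for example a step nested two levels down) intact for the child to resolve in turn.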

-

__init__(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_hyperparams(hyperparams: Union[Dict[KT, VT], neuraxle.hyperparams.space.HyperparameterSamples]) → neuraxle.base.BaseTransformer[source]¶ Set the step hyperparameters.

Example :

    step.set_hyperparams(HyperparameterSamples({
        'learning_rate': 0.10
    }))

- Parameters

hyperparams – hyperparameters

- Returns

self

Note

This is a recursive method that will call _set_hyperparams().

See also

HyperparameterSamples, _HasChildrenMixin, BaseStep.apply(), _HasChildrenMixin._apply(), _HasChildrenMixin._set_train()

-

_set_hyperparams(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples) → neuraxle.hyperparams.space.HyperparameterSamples[source]¶

-

update_hyperparams(hyperparams: Union[Dict[KT, VT], neuraxle.hyperparams.space.HyperparameterSamples]) → neuraxle.base.BaseTransformer[source]¶ Update the step hyperparameters without removing the already-set hyperparameters. This can be useful to add more hyperparameters to the existing ones without flushing the ones that were already set.

Example :

    step.set_hyperparams(HyperparameterSamples({
        'learning_rate': 0.10,
        'weight_decay': 0.001
    }))

    step.update_hyperparams(HyperparameterSamples({
        'learning_rate': 0.01
    }))

    assert step.get_hyperparams()['learning_rate'] == 0.01
    assert step.get_hyperparams()['weight_decay'] == 0.001

- Parameters

hyperparams – hyperparameters

- Returns

self

See also

HyperparameterSamples, _HasChildrenMixin, BaseStep.apply(), _HasChildrenMixin._apply(), _HasChildrenMixin._update_hyperparams()
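The difference between setting and updating can be sketched with a plain dictionary. This is an illustration only: `ToyHyperparamsHolder` is hypothetical and uses a `dict` in place of HyperparameterSamples.

```python
from typing import Any, Dict


class ToyHyperparamsHolder:
    """Hypothetical holder that uses a plain dict in place of HyperparameterSamples."""

    def __init__(self) -> None:
        self.hyperparams: Dict[str, Any] = {}

    def set_hyperparams(self, hyperparams: Dict[str, Any]) -> "ToyHyperparamsHolder":
        self.hyperparams = dict(hyperparams)  # replace everything that was set before
        return self

    def update_hyperparams(self, hyperparams: Dict[str, Any]) -> "ToyHyperparamsHolder":
        self.hyperparams.update(hyperparams)  # overwrite given keys, keep the others
        return self

    def get_hyperparams(self) -> Dict[str, Any]:
        return dict(self.hyperparams)


step = ToyHyperparamsHolder()
step.set_hyperparams({'learning_rate': 0.10, 'weight_decay': 0.001})
step.update_hyperparams({'learning_rate': 0.01})
updated = step.get_hyperparams()

step.set_hyperparams({'learning_rate': 0.5})
replaced = step.get_hyperparams()
```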

-

_update_hyperparams(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples) → neuraxle.hyperparams.space.HyperparameterSamples[source]¶

-

get_hyperparams() → neuraxle.hyperparams.space.HyperparameterSamples[source]¶ Get step hyperparameters as HyperparameterSamples.

- Returns

step hyperparameters

Note

This is a recursive method that will call _get_hyperparams().

See also

HyperparameterSamples, _HasChildrenMixin, BaseStep.apply(), _HasChildrenMixin._apply(), _HasChildrenMixin._get_hyperparams()

-

set_params(**params) → neuraxle.base.BaseTransformer[source]¶ Set step hyperparameters with a dictionary.

Example :

    s.set_params(learning_rate=0.1)
    hyperparams = s.get_params()
    assert hyperparams == {"learning_rate": 0.1}

- Parameters

params – arbitrary number of keyword arguments for hyperparameters

Note

This is a recursive method that will call _set_params() in the end.

See also

HyperparameterSamples, _HasChildrenMixin, apply(), _apply(), _set_params()

-

get_params(deep=False) → dict[source]¶ Get step hyperparameters as a flat primitive dict. The “deep” parameter is ignored.

Example :

    s.set_params(learning_rate=0.1)
    hyperparams = s.get_params()
    assert hyperparams == {"learning_rate": 0.1}

- Returns

hyperparameters

See also

HyperparameterSamples, _HasChildrenMixin, apply(), _apply(), _get_params()

-

-

class

neuraxle.base._HasSavers(savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Bases:

neuraxle.base.MixinForBaseService

An internal class to represent a step that can be saved. A step with savers is saved using its list of savers. Each saver saves some parts of the step.

A pipeline can save the steps that need to be saved (see save()):

    step = Pipeline([
        Identity()
    ])
    step.save()
    step = step.load()

Or, it can also save a full dump that can be reloaded without any source code:

    step = Identity().set_name('step_name')
    step.save(full_dump=True)
    step = ExecutionContext().load('step_name')

-

__init__(savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

invalidate() → neuraxle.base.BaseTransformer[source]¶ Invalidate a step, and all of its children. Invalidating a step makes it eligible to be saved again.

- A step is invalidated when any of the following things happen:

a hyperparameter has changed (see _HasHyperparams.set_hyperparams())

a hyperparameter space has changed (see _HasHyperparamsSpace.set_hyperparams_space())

the fit method has been called (see _FittableStep.handle_fit())

the fit_transform method has been called (see _FittableStep.handle_fit_transform())

the step name has changed (see BaseStep.set_name())

- Returns

self

Note

This is a recursive method used in _HasChildrenMixin.

See also

apply(), _apply()

-

get_savers() → List[neuraxle.base.BaseSaver][source]¶ Get the step savers of a pipeline step.

- Returns

step savers

See also

-

set_savers(savers: List[neuraxle.base.BaseSaver]) → neuraxle.base.BaseTransformer[source]¶ Set the step savers of a pipeline step.

- Returns

self

See also

-

add_saver(saver: neuraxle.base.BaseSaver) → neuraxle.base.BaseTransformer[source]¶ Add a step saver of a pipeline step.

- Returns

self

See also

-

should_save() → bool[source]¶ Returns true if the step should be saved. If the step has been initialized and invalidated, then it must be saved.

A step is invalidated when any of the following things happen:

a mutation has been performed on the step (see mutate())

a hyperparameter has changed (see _HasHyperparams.set_hyperparams())

a hyperparameter space has changed (see _HasHyperparamsSpace.set_hyperparams_space())

the fit method has been called (see _FittableStep.handle_fit())

the fit_transform method has been called (see _FittableStep.handle_fit_transform())

the step name has changed (see BaseStep.set_name())

- Returns

if the step should be saved
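The rule "initialized and invalidated means it must be saved" can be sketched with two boolean flags. This is a stand-alone illustration under assumptions: `ToySavableStep`, its flag names, and the choice to start in the invalidated state are hypothetical and may differ from the library's internals.

```python
class ToySavableStep:
    """Hypothetical step tracking the two flags that decide whether to save."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.is_initialized = False
        self.is_invalidated = True  # assumption: a new step has never been saved

    def setup(self) -> "ToySavableStep":
        self.is_initialized = True
        return self

    def invalidate(self) -> "ToySavableStep":
        self.is_invalidated = True
        return self

    def set_name(self, name: str) -> "ToySavableStep":
        self.name = name
        return self.invalidate()  # a name change makes the step eligible for saving again

    def should_save(self) -> bool:
        return self.is_initialized and self.is_invalidated

    def save(self) -> "ToySavableStep":
        # A real saver would write to disk; this sketch only clears the flag.
        self.is_invalidated = False
        return self


step = ToySavableStep("a")
before_setup = step.should_save()
step.setup()
after_setup = step.should_save()
step.save()
after_save = step.should_save()
step.set_name("b")
after_rename = step.should_save()
```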

-

save(context: neuraxle.base.ExecutionContext, full_dump=True) → neuraxle.base.BaseTransformer[source]¶ Save step using the execution context to create the directory to save the step into. The saving happens by looping through all of the step savers in the reversed order.

Some savers just save parts of objects, some save it all or what remains. The ExecutionContext.stripped_saver has to be called last because it needs a stripped version of the step.

- Parameters

context (ExecutionContext) – context to save from

full_dump (bool) – save full pipeline dump to be able to load everything without source code (true by default).

- Returns

self
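The reversed iteration described above can be sketched as follows: savers that handle one part run first and strip that part, so the saver that stores the remainder sees a stripped step. All names here (`ToyPartSaver`, `ToyRemainderSaver`, `save_with_savers`) are hypothetical, and a dict stands in for the step.

```python
from typing import Dict, List


class ToyPartSaver:
    """Hypothetical saver that stores one attribute and strips it from the step."""

    def __init__(self, key: str, log: List[str]) -> None:
        self.key = key
        self.log = log

    def save_step(self, step: Dict[str, object]) -> Dict[str, object]:
        self.log.append(self.key)
        return {k: v for k, v in step.items() if k != self.key}


class ToyRemainderSaver:
    """Hypothetical saver that stores whatever is left of the step."""

    def __init__(self, log: List[str]) -> None:
        self.log = log

    def save_step(self, step: Dict[str, object]) -> Dict[str, object]:
        self.log.append("remainder:" + ",".join(sorted(step)))
        return step


def save_with_savers(step: Dict[str, object], savers: list) -> Dict[str, object]:
    # Loop through the savers in reversed order, so the first one in the list runs last.
    for saver in reversed(savers):
        step = saver.save_step(step)
    return step


log: List[str] = []
savers = [ToyRemainderSaver(log), ToyPartSaver("weights", log), ToyPartSaver("vocabulary", log)]
stripped = save_with_savers({"weights": [0.1], "vocabulary": ["a"], "name": "step"}, savers)
```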

See also

-

load(context: neuraxle.base.ExecutionContext, full_dump=True) → neuraxle.base.BaseTransformer[source]¶ Load step using the execution context to create the directory of the saved step.

- Parameters

context (ExecutionContext) – execution context to load step from

full_dump (bool) – whether to load from a full dump

- Returns

loaded step

Warning

Please do not override this method because on loading it is an identity step that will load whatever step you coded.

See also

-

-

class

neuraxle.base._CouldHaveContext[source]¶ Bases:

neuraxle.base.MixinForBaseService

Step that can have a context. It has “has service assertions” to ensure that the context has registered all the necessary services.

A context can be injected with the with_context method:

    context = ExecutionContext(root=tmpdir)
    service = SomeService()
    context.set_service_locator({SomeBaseService: service})

    p = Pipeline([
        SomeStep().assert_has_services(SomeBaseService)
    ]).with_context(context=context)

Or alternatively,

    p = Pipeline([
        RegisterSomeService(),
        SomeStep().assert_has_services_at_execution(SomeBaseService)
    ])

Context services can be used inside any step with handler methods:

    class SomeStep(ForceHandleMixin, Identity):
        def __init__(self):
            Identity.__init__(self)
            ForceHandleMixin.__init__(self)

        def _transform_data_container(self, data_container: DataContainer, context: ExecutionContext):
            service: SomeBaseService = context.get_service(SomeBaseService)
            service.service_method(data_container.data_inputs)
            return data_container

See also

-

with_context(context: neuraxle.base.ExecutionContext) → neuraxle.base.StepWithContext[source]¶ A higher-order step to inject a context inside a step. A step with a context forces the pipeline to use that context through handler methods. This is useful for dependency injection because you can register services inside the ExecutionContext. It also ensures that the context has registered all the necessary services.

    context = ExecutionContext(tmpdir)
    context.set_service_locator(ServiceLocator().services)  # where services is of type Dict[Type['BaseService'], 'BaseService']

    p = WithContext(Pipeline([
        # When the context will be processing the SomeStep,
        # it will be asserted that the context will be able to access the SomeBaseService
        SomeStep().with_assertion_has_services(SomeBaseService)
    ]), context)

See also

-

assert_has_services(*service_assertions) → neuraxle.base.GlobalyRetrievableServiceAssertionWrapper[source]¶ Set all service assertions to be made at the root of the pipeline and before processing the step.

- Parameters

service_assertions (List[Type]) – base types that need to be available in the execution context at the root of the pipeline

-

assert_has_services_at_execution(*service_assertions) → neuraxle.base.LocalServiceAssertionWrapper[source]¶ Set all service assertions to be made before processing the step.

- Parameters

service_assertions (List[Type]) – base types that need to be available in the execution context before the execution of the step
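A service assertion boils down to checking that each required base type is registered in the context before the step runs. The sketch below shows that check in isolation; `ToyContext`, `missing_services`, and the two service classes are hypothetical and unrelated to Neuraxle's ExecutionContext.

```python
from typing import Dict, List, Type


class ToyContext:
    """Hypothetical context holding services keyed by their base type."""

    def __init__(self) -> None:
        self.services: Dict[Type, object] = {}

    def register_service(self, service_type: Type, instance: object) -> "ToyContext":
        self.services[service_type] = instance
        return self

    def has_service(self, service_type: Type) -> bool:
        return service_type in self.services


def missing_services(context: ToyContext, *service_assertions: Type) -> List[str]:
    """Return the names of the asserted service types that the context lacks."""
    return [t.__name__ for t in service_assertions if not context.has_service(t)]


class SomeBaseService:
    pass


class OtherService:
    pass


context = ToyContext().register_service(SomeBaseService, SomeBaseService())
missing = missing_services(context, SomeBaseService, OtherService)
```

In this sketch the difference between the two assertion methods would only be when `missing_services` is evaluated: once at the root of the pipeline, or right before the step executes.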

-

_assert_at_lifecycle(context: neuraxle.base.ExecutionContext)[source]¶ Assert that the context has all the services required to process the step. This method will be registered within a handler method’s _will_process or _did_process, or other lifecycle methods like these.

-

_assert(condition: bool, err_message: str, context: neuraxle.base.ExecutionContext = None)[source]¶ Assert that the condition is true. If not, raise an exception with the message. The exception will be logged with the logger in the context. If the context is in ExecutionPhase.PROD, the exception will not be raised and only logged.

It is good to call assertions here in a context-dependent way. For more information on contextual validation, read Martin Fowler’s article on Contextual Validation.

- Parameters

condition (bool) – condition to assert

err_message (str) – message to log and raise if the condition is false

context (ExecutionContext) – execution context to log the exception, and not raise it if in PROD mode.
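The "log always, raise unless in production" behavior can be sketched with the standard library alone. This is an illustration under assumptions: `ToyPhase`, `contextual_assert`, and the use of `AssertionError` are hypothetical, and the exception type actually raised by the library is not stated here.

```python
import logging
from enum import Enum


class ToyPhase(Enum):
    """Hypothetical stand-in for an execution phase enum."""
    DEV = "dev"
    PROD = "prod"


def contextual_assert(
    condition: bool, err_message: str, phase: ToyPhase, logger: logging.Logger
) -> bool:
    """Return True if the condition holds; otherwise log, and raise unless in PROD."""
    if condition:
        return True
    logger.error(err_message)  # the failure is always logged
    if phase is not ToyPhase.PROD:
        raise AssertionError(err_message)  # assumption: exception type chosen for the sketch
    return False


logger = logging.getLogger("toy_assert")
logger.addHandler(logging.NullHandler())  # keep the sketch silent
logger.propagate = False

prod_result = contextual_assert(False, "shape mismatch", ToyPhase.PROD, logger)

try:
    contextual_assert(False, "shape mismatch", ToyPhase.DEV, logger)
    dev_raised = False
except AssertionError:
    dev_raised = True
```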

-

_assert_equals(a: Any, b: Any, err_message: str, context: neuraxle.base.ExecutionContext)[source]¶ Assert that a equals b. If not, raise an exception with the message. The exception will be logged with the logger in the context. If the context is in ExecutionPhase.PROD, the exception will not be raised and only logged.

- Parameters

a – element to compare to b with ==

b – element to compare to a with ==

err_message (str) – message to log and raise if the elements are not equal

context (ExecutionContext) – execution context to log the exception, and not raise it if in PROD mode.

-

-

class

neuraxle.base.BaseTransformer(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None, config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None, name: str = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Bases:

neuraxle.base._CouldHaveContext, neuraxle.base._HasSavers, neuraxle.base._TransformerStep, neuraxle.base._HasHyperparamsSpace, neuraxle.base._HasHyperparams, neuraxle.base._HasSetupTeardownLifecycle, neuraxle.base.BaseService, abc.ABC

Base class for a pipeline step that can only be transformed.

Every step has hyperparameters and hyperparameter spaces that can be set before the learning process begins (see _HasHyperparams and _HasHyperparamsSpace for more info).

Example usage:

    class AddN(BaseTransformer):
        def __init__(self, add=1):
            super().__init__(hyperparams=HyperparameterSamples({'add': add}))

        def transform(self, data_inputs):
            if not isinstance(data_inputs, np.ndarray):
                data_inputs = np.array(data_inputs)
            return data_inputs + self.hyperparams['add']

        def inverse_transform(self, processed_outputs):
            if not isinstance(processed_outputs, np.ndarray):
                processed_outputs = np.array(processed_outputs)
            return processed_outputs - self.hyperparams['add']

Note

All heavy initialization logic should be done inside the setup method (e.g.: things inside GPU), and NOT in the constructor.

-

__init__(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None, config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None, name: str = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_train(is_train: bool = True)[source]¶ This method overrides the method of BaseStep to also consider the wrapped step as well as self. Set pipeline step mode to train or test.

Note

This is a recursive method used in _HasChildrenMixin.

See also

apply(), _apply(), _set_train()

-

_abc_impl= <_abc_data object>¶

-

-

class

neuraxle.base.BaseStep(hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None, config: Union[Dict[KT, VT], neuraxle.hyperparams.space.RecursiveDict] = None, name: str = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Bases:

neuraxle.base._FittableStep, neuraxle.base.BaseTransformer, abc.ABC

Base class for a transformer step that can also be fitted.

If a step is not fittable, you can inherit from BaseTransformer instead. If a step is not transformable, you can inherit from NonTransformableMixin. A step should only change its state inside fit() or fit_transform() (see _FittableStep for more info). Every step has hyperparameters and hyperparameter spaces that can be set before the learning process begins (see _HasHyperparams and _HasHyperparamsSpace for more info).

Example usage:

    class Normalize(BaseStep):
        def __init__(self):
            BaseStep.__init__(self)
            self.mean = None
            self.std = None

        def fit(
            self, data_inputs: ARG_X_INPUTTED, expected_outputs: ARG_Y_EXPECTED = None
        ) -> 'BaseStep':
            self._calculate_mean_std(data_inputs)
            return self

        def _calculate_mean_std(self, data_inputs: ARG_X_INPUTTED):
            self.mean = np.array(data_inputs).mean(axis=0)
            self.std = np.array(data_inputs).std(axis=0)

        def fit_transform(
            self, data_inputs: ARG_X_INPUTTED, expected_outputs: ARG_Y_EXPECTED = None
        ) -> Tuple['BaseStep', ARG_Y_PREDICTD]:
            self.fit(data_inputs, expected_outputs)
            return self, (np.array(data_inputs) - self.mean) / self.std

        def transform(self, data_inputs: ARG_X_INPUTTED) -> ARG_Y_PREDICTD:
            if self.mean is None or self.std is None:
                self._calculate_mean_std(data_inputs)
            return (np.array(data_inputs) - self.mean) / self.std

    p = Pipeline([
        Normalize()
    ])

    p, outputs = p.fit_transform(data_inputs, expected_outputs)

See also

BaseTransformer, _TransformerStep, NonFittableMixin, NonTransformableMixin, ExecutionContext, Pipeline

-

_abc_impl= <_abc_data object>¶

-

-

class

neuraxle.base.MixinForBaseTransformer[source]¶ Bases:

object

Any step or transformer within a pipeline that inherits from this class should implement BaseStep/BaseTransformer and initialize it before any mixin. This class checks that this is the case at initialization.

-

class

neuraxle.base.MetaStepMixin(wrapped: BaseServiceT = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Bases:

neuraxle.base.MixinForBaseTransformer, neuraxle.base.MetaServiceMixin

A class to represent a step that wraps another step. It can be used for many things. For example, ForEachDataInput adds a loop before any calls to the wrapped step:

    class ForEachDataInput(MetaStepMixin, BaseStep):
        def __init__(
            self,
            wrapped: BaseStep
        ):
            BaseStep.__init__(self)
            MetaStepMixin.__init__(self, wrapped)

        def fit(self, data_inputs: ARG_X_INPUTTED, expected_outputs: ARG_Y_EXPECTED = None) -> 'BaseStep':
            if expected_outputs is None:
                expected_outputs = [None] * len(data_inputs)

            for di, eo in zip(data_inputs, expected_outputs):
                self.wrapped = self.wrapped.fit(di, eo)

            return self

        def transform(self, data_inputs: ARG_X_INPUTTED) -> ARG_Y_PREDICTD:
            outputs = []
            for di in data_inputs:
                output = self.wrapped.transform(di)
                outputs.append(output)

            return outputs

        def fit_transform(
            self, data_inputs: ARG_X_INPUTTED, expected_outputs: ARG_Y_EXPECTED = None
        ) -> Tuple['BaseStep', ARG_Y_PREDICTD]:
            if expected_outputs is None:
                expected_outputs = [None] * len(data_inputs)

            outputs = []
            for di, eo in zip(data_inputs, expected_outputs):
                self.wrapped, output = self.wrapped.fit_transform(di, eo)
                outputs.append(output)

            return self, outputs

See also

ForEachDataInput, StepClonerForEachDataInput

-

__init__(wrapped: BaseServiceT = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

handle_fit_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶

-

handle_transform(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶

-

_fit_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶

-

_fit_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseStep[source]¶

-

_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶

-

_inverse_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT][source]¶

-

-

class

neuraxle.base.MetaStep(wrapped: BaseServiceT = None, hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None, name: str = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Bases:

neuraxle.base.MetaStepMixin, neuraxle.base.BaseStep

-

__init__(wrapped: BaseServiceT = None, hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = None, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = None, name: str = None, savers: List[neuraxle.base.BaseSaver] = None)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

_abc_impl= <_abc_data object>¶

-

-

class

neuraxle.base.MetaStepJoblibStepSaver[source]¶ Bases:

neuraxle.base.JoblibStepSaver

Custom saver for meta step mixin.

-

save_step(step: neuraxle.base.MetaStep, context: neuraxle.base.ExecutionContext) → neuraxle.base.MetaStep[source]¶ Save MetaStepMixin.

1. Save the wrapped step.
2. Strip the wrapped step from the meta step mixin.
3. Save the meta step with the wrapped step savers.

- Return type

- Parameters

step – meta step to save

context (ExecutionContext) – execution context

- Returns

-

load_step(step: neuraxle.base.MetaStep, context: neuraxle.base.ExecutionContext) → neuraxle.base.MetaStep[source]¶ Load MetaStepMixin.

1. Loop through all of the sub step savers, and only load the sub steps that have been saved.
2. Refresh steps.

- Parameters

step – step to load

context (ExecutionContext) – execution context

- Returns

loaded meta step
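The save and load sequences described for this saver can be sketched roughly as follows. This is an illustration under stated assumptions, not the library's implementation: an in-memory dict stands in for joblib files on disk, and `WrappedSketch`, `MetaSketch`, `save_meta_step`, `load_meta_step` and the `wrapped_key` attribute are all hypothetical names.

```python
import copy


class WrappedSketch:
    """Hypothetical wrapped step holding some fitted state."""

    def __init__(self, weight):
        self.weight = weight


class MetaSketch:
    """Hypothetical meta step that wraps another step."""

    def __init__(self, wrapped):
        self.wrapped = wrapped
        self.wrapped_key = None  # assumed bookkeeping used to find the wrapped step at load time


def save_meta_step(step, storage):
    # 1. Save the wrapped step on its own.
    storage["wrapped"] = copy.deepcopy(step.wrapped)
    # 2. Strip the wrapped step from a shallow copy of the meta step.
    stripped = copy.copy(step)
    stripped.wrapped = None
    # 3. Save the meta step along with what is needed to reload the wrapped step.
    stripped.wrapped_key = "wrapped"
    storage["meta"] = stripped
    return step


def load_meta_step(storage):
    step = copy.copy(storage["meta"])
    # Reattach the wrapped step that was saved separately.
    step.wrapped = copy.deepcopy(storage[step.wrapped_key])
    return step


storage = {}
original = MetaSketch(WrappedSketch(weight=3))
save_meta_step(original, storage)
restored = load_meta_step(storage)
print(restored.wrapped.weight)
```

Saving the wrapped step separately lets it use its own saver, while the stripped meta step stays small.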

-

_abc_impl= <_abc_data object>¶

-

-

class

neuraxle.base.NonFittableMixin[source]¶ Bases:

neuraxle.base.MixinForBaseTransformer

A pipeline step that requires no fitting: fitting just returns self when called to do no action. Note: fit methods are not implemented.
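As a rough illustration of the "fitting just returns self" behaviour, here is a plain-Python sketch. `NonFittableSketch` and `Doubler` are hypothetical names made up for this example; the real mixin works on data containers and an execution context, which are left out here.

```python
class NonFittableSketch:
    """Hypothetical mixin: fitting does nothing and returns self."""

    def fit(self, data_inputs, expected_outputs=None):
        return self

    def fit_transform(self, data_inputs, expected_outputs=None):
        # Nothing to fit, so this reduces to a plain transform.
        return self, self.transform(data_inputs)


class Doubler(NonFittableSketch):
    """Hypothetical stateless step that only needs a transform method."""

    def transform(self, data_inputs):
        return [2 * x for x in data_inputs]


step = Doubler()
fitted = step.fit([1, 2, 3])
_, outputs = step.fit_transform([1, 2, 3])
print(outputs)
```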

-

_fit_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.base.BaseStep[source]¶

-

_fit_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → Tuple[neuraxle.base.BaseStep, neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]]][source]¶

-

-

class

neuraxle.base.NonTransformableMixin[source]¶ Bases:

neuraxle.base.MixinForBaseTransformer

A pipeline step that has no effect at all but to return the same data without changes. Transform method is automatically implemented as changing nothing.

Example:

    class PrintOnFit(NonTransformableMixin, BaseStep):
        def __init__(self):
            BaseStep.__init__(self)

        def fit(self, data_inputs: ARG_X_INPUTTED, expected_outputs: ARG_Y_EXPECTED = None) -> BaseStep:
            print((data_inputs, expected_outputs))
            return self

Note

Fit methods are not implemented.

-

_fit_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext)[source]¶

-

_transform_data_container(data_container: neuraxle.data_container.DataContainer[~IDT, ~DIT, ~EOT][IDT, DIT, EOT], context: neuraxle.base.ExecutionContext) → neuraxle.data_container.DataContainer[~IDT, ~DIT, typing.Union[~EOT, NoneType]][IDT, DIT, Optional[EOT]][source]¶ Do nothing - return the same data.

- Parameters

data_container – data container

context (ExecutionContext) – execution context

- Returns

data container

-

-

class

neuraxle.base.TruncableJoblibStepSaver[source]¶ Bases:

neuraxle.base.JoblibStepSaver

Step saver for a TruncableSteps. TruncableJoblibStepSaver saves and loads all of the sub steps using their savers.

See also

-

save_step(step: neuraxle.base.TruncableSteps, context: neuraxle.base.ExecutionContext)[source]¶

1. Loop through all the steps, and save the ones that need to be saved.
2. Add a new property called sub step savers inside truncable steps to be able to load sub steps when loading.
3. Strip steps from truncable steps at the end.

- Parameters

step – step to save

context (ExecutionContext) – execution context

- Returns

-

load_step(step: neuraxle.base.TruncableSteps, context: neuraxle.base.ExecutionContext) → neuraxle.base.TruncableSteps[source]¶

1. Loop through all of the sub step savers, and only load the sub steps that have been saved.
2. Refresh steps.

- Parameters

step – step to load

context (ExecutionContext) – execution context

- Returns

loaded truncable steps

-

_abc_impl= <_abc_data object>¶

-

-

class

neuraxle.base.TruncableStepsMixin(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]], mute_step_renaming_warning: bool = True)[source]¶ Bases:

neuraxle.base._HasChildrenMixin

A mixin for services that can be truncated.

-

__init__(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]], mute_step_renaming_warning: bool = True)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

set_steps(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]], invalidate=True) → neuraxle.base.TruncableStepsMixin[source]¶ Set steps as tuple.

- Parameters

steps_as_tuple – list of tuples, each containing a step name and a step

- Returns

-

get_children() → List[BaseServiceT][source]¶ Get the list of sub steps inside the step with children.

- Returns

children steps

-

_wrap_non_base_steps(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]]) → List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]][source]¶ If some steps are not of type BaseStep, we’ll try to make them of this type. For instance, sklearn objects will be wrapped by a SKLearnWrapper here.

- Parameters

steps_as_tuple – a list of steps or of named tuples of steps (e.g.: NamedStepsList)

- Returns

a NamedStepsList
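The wrapping idea can be sketched in plain Python as below. Everything here is a hypothetical stand-in: `BaseStepSketch` plays the role of BaseStep, `WrapperSketch` plays the role of a wrapper such as SKLearnWrapper, and `wrap_non_base_steps` is an assumed free-function version of the method, not the library's code.

```python
class BaseStepSketch:
    """Hypothetical stand-in for the library's base step type."""

    def transform(self, data_inputs):
        raise NotImplementedError


class WrapperSketch(BaseStepSketch):
    """Hypothetical adapter that makes a foreign object look like a base step."""

    def __init__(self, wrapped):
        self.wrapped = wrapped

    def transform(self, data_inputs):
        return self.wrapped.transform(data_inputs)


def wrap_non_base_steps(steps):
    """Wrap every step that is not already a BaseStepSketch; keep names as given."""
    result = []
    for item in steps:
        name, step = item if isinstance(item, tuple) else (None, item)
        if not isinstance(step, BaseStepSketch):
            step = WrapperSketch(step)
        result.append(step if name is None else (name, step))
    return result


class ThirdParty:
    """Hypothetical foreign estimator exposing only a transform method."""

    def transform(self, data_inputs):
        return [v * 10 for v in data_inputs]


native = BaseStepSketch()
wrapped_steps = wrap_non_base_steps([("scale", ThirdParty()), ThirdParty(), native])
print(type(wrapped_steps[0][1]).__name__)
```

Named entries stay as (name, step) tuples and unnamed entries stay bare, so a later naming pass can still tell them apart.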

-

_patch_missing_names(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]]) → List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]][source]¶ Make sure that each sub step has a unique name, and add a name to the sub steps that don’t have one already.

- Parameters

steps_as_tuple – a NamedStepsList

- Returns

a NamedStepsList with fixed names

-

_rename_step(step_name, class_name, names_yet: set)[source]¶ Rename a step by adding a number suffix after the class name. Uniqueness is ensured using the names_yet parameter.

- Parameters

step_name – step name

class_name – class name

names_yet (set) – names already taken

- Returns

new step name
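A minimal sketch of how name patching and renaming could fit together is shown below. It assumes that unnamed steps default to their class name and that the numeric suffix starts at 1; both are assumptions made for this illustration, and `rename_step` and `patch_missing_names` are hypothetical free functions rather than the library's methods.

```python
def rename_step(step_name, class_name, names_yet):
    """Return step_name if it is free, else the class name plus a number suffix."""
    candidate = step_name
    suffix = 1  # assumed starting suffix
    while candidate in names_yet:
        candidate = f"{class_name}{suffix}"
        suffix += 1
    return candidate


def patch_missing_names(steps):
    """Give every step a unique name; unnamed steps start from their class name."""
    names_yet = set()
    patched = []
    for item in steps:
        if isinstance(item, tuple):
            name, step = item
        else:
            step = item
            name = type(step).__name__  # assumed default name
        name = rename_step(name, type(step).__name__, names_yet)
        names_yet.add(name)
        patched.append((name, step))
    return patched


class Scaler:
    """Hypothetical step class used only to produce names."""


patched = patch_missing_names([Scaler(), Scaler(), ("custom", Scaler())])
names = [name for name, _ in patched]
print(names)
```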

-

_refresh_steps(invalidate=True)[source]¶ Private method to refresh inner state after having edited self.steps_as_tuple (recreate self.steps from self.steps_as_tuple).
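The reason a refresh is needed can be illustrated with a small sketch: the name-to-step mapping is derived from the list of tuples, so it goes stale when the list is edited. `RefreshSketch` is a hypothetical class, and the use of an `OrderedDict` for `self.steps` is an assumption of this example.

```python
from collections import OrderedDict


class RefreshSketch:
    """Hypothetical container keeping a list of (name, step) and a derived mapping."""

    def __init__(self, steps_as_tuple):
        self.steps_as_tuple = list(steps_as_tuple)
        self.steps = OrderedDict()
        self._refresh_steps()

    def _refresh_steps(self):
        # Recreate the mapping from the list, which is the source of truth here.
        self.steps = OrderedDict(self.steps_as_tuple)


container = RefreshSketch([("a", "step A"), ("b", "step B")])
container.steps_as_tuple.append(("c", "step C"))
stale_keys = list(container.steps)   # mapping not refreshed yet
container._refresh_steps()
fresh_keys = list(container.steps)   # mapping now matches the list
print(stale_keys, fresh_keys)
```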

-

-

class

neuraxle.base.TruncableSteps(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]], hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = {}, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = {}, mute_step_renaming_warning: bool = True)[source]¶ Bases:

neuraxle.base.TruncableStepsMixin, neuraxle.base.BaseStep, abc.ABC

Step that contains multiple steps.

Pipeline inherits from this class. It is possible to truncate this step with __getitem__().

self.steps contains the actual steps.

self.steps_as_tuple contains a list of tuples of (step name, step).

See also

-

__init__(steps_as_tuple: List[Union[Tuple[str, BaseTransformerT], BaseTransformerT]], hyperparams: neuraxle.hyperparams.space.HyperparameterSamples = {}, hyperparams_space: neuraxle.hyperparams.space.HyperparameterSpace = {}, mute_step_renaming_warning: bool = True)[source]¶ Initialize self. See help(type(self)) for accurate signature.

-

append(item: Tuple[str, BaseTransformer]) → neuraxle.base.TruncableSteps[source]¶ Add an item to steps as tuple.

- Parameters

item – item tuple (step name, step)

- Returns

self

-

popitem(key=None) → Tuple[str, neuraxle.base.BaseTransformer][source]¶ Pop the last step, or the step with the given key

- Parameters

key – step name to pop, or None

- Returns

last step item

-

popfrontitem() → Tuple[str, neuraxle.base.BaseTransformer][source]¶ Pop the first step.

- Returns

first step item
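The three list-editing methods above (append, popitem, popfrontitem) can be pictured with the following plain-Python sketch. `StepsSketch` is a hypothetical class operating on a bare list of (name, step) tuples; raising `KeyError` for an unknown key is an assumption of this example, not documented behaviour.

```python
class StepsSketch:
    """Hypothetical container mimicking append, popitem and popfrontitem."""

    def __init__(self, steps_as_tuple):
        self.steps_as_tuple = list(steps_as_tuple)

    def append(self, item):
        self.steps_as_tuple.append(item)
        return self  # returning self allows chaining

    def popitem(self, key=None):
        if key is None:
            return self.steps_as_tuple.pop()  # last step
        for index, (name, _) in enumerate(self.steps_as_tuple):
            if name == key:
                return self.steps_as_tuple.pop(index)
        raise KeyError(key)  # assumed behaviour for a missing key

    def popfrontitem(self):
        return self.steps_as_tuple.pop(0)  # first step


steps = StepsSketch([("a", 1), ("b", 2)]).append(("c", 3)).append(("d", 4))
last = steps.popitem()
by_key = steps.popitem("b")
first = steps.popfrontitem()
print(last, by_key, first, steps.steps_as_tuple)
```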

-

split(step_type: type) → List[neuraxle.base.TruncableSteps][source]¶ Split truncable steps by a step class (type).

- Parameters

step_type (type) – step class type to split on.

- Returns

list of truncable steps containing the split steps
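One way to picture splitting by type is the sketch below. It assumes that a step of the given type closes the group it belongs to and that trailing steps form a final group; the documentation above does not state where the matching step ends up, so treat that as an assumption. `split_by_type`, `Barrier` and `Plain` are hypothetical names.

```python
def split_by_type(steps_as_tuple, step_type):
    """Split a list of (name, step) into groups, each closed by a step_type instance."""
    groups, current = [], []
    for name, step in steps_as_tuple:
        current.append((name, step))
        if isinstance(step, step_type):
            # Assumed: the matching step is the last element of its group.
            groups.append(current)
            current = []
    if current:
        groups.append(current)  # trailing steps after the last match
    return groups


class Plain:
    """Hypothetical ordinary step."""


class Barrier:
    """Hypothetical step type to split on."""


steps = [("a", Plain()), ("b", Barrier()), ("c", Plain())]
groups = split_by_type(steps, Barrier)
group_names = [[name for name, _ in group] for group in groups]
print(group_names)
```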

-

ends_with(step_type: Type[CT_co]) → bool[source]¶ Returns true if truncable steps end with a step of the given type.

- Return type

bool

- Parameters